I built a clinical assistant that could summarize patient notes pretty well. Then I asked it about the same patient two weeks later, and it confidently ignored everything it had already seen.

That’s when I realized: I hadn’t built a system. I’d built a stateless loop.

What this system actually does

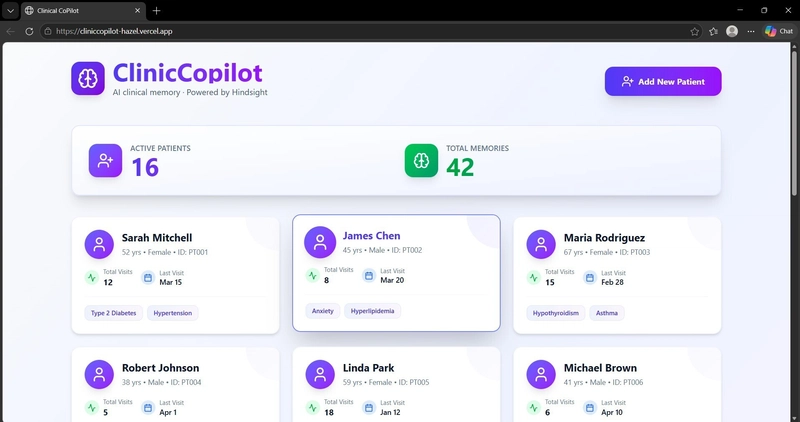

Clinical CoPilot is a memory-first system for primary care workflows. It takes raw visit notes and turns them into structured, recallable memory that persists across time. The goal is simple: before a doctor sees a patient, the system should already know what matters.

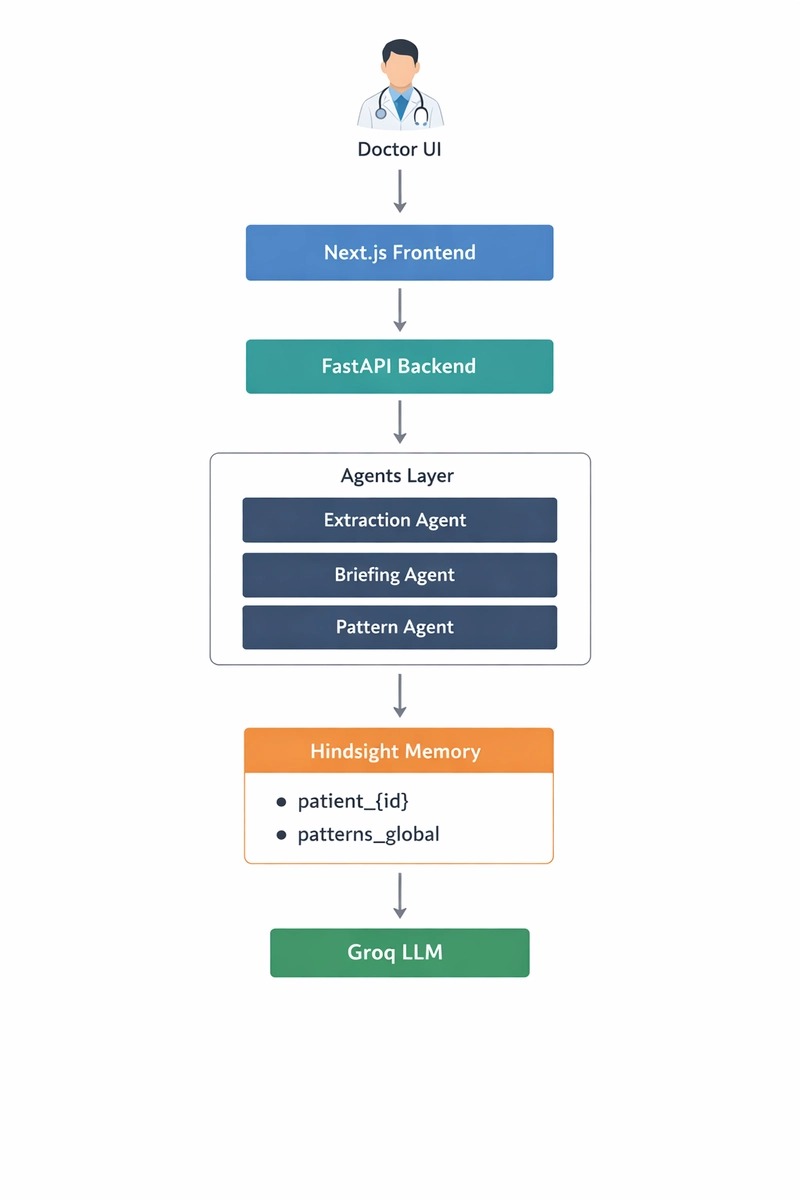

The architecture is straightforward:

A FastAPI backend orchestrates the flow

An extraction agent parses visit notes into structured memory

A memory layer (Hindsight) stores those events

A briefing agent generates pre-visit summaries

A pattern agent identifies trends across patients

Each patient has their own memory thread. Every visit adds new entries. Nothing gets overwritten.
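Here is a minimal, framework-free sketch of the write path and the read path. The agent functions are hypothetical stubs standing in for the LLM-backed extraction and briefing agents, and a plain dictionary stands in for Hindsight. The point is only the shape: one thread per patient, and every visit appends.

```python
from collections import defaultdict
from typing import Callable

# Hypothetical stand-in for the memory layer: thread_id -> list of memories.
# Entries are only ever appended, never overwritten.
store: dict[str, list[dict]] = defaultdict(list)


def thread_for(patient_id: str) -> str:
    return f"patient_{patient_id}"


def ingest_visit(patient_id: str, note: str,
                 extract: Callable[[str], list[dict]]) -> int:
    """Write path: extraction agent -> memory layer. Returns entries added."""
    memories = extract(note)
    store[thread_for(patient_id)].extend(memories)
    return len(memories)


def prepare_briefing(patient_id: str,
                     brief: Callable[[list[dict]], str]) -> str:
    """Read path: memory layer -> briefing agent."""
    return brief(store[thread_for(patient_id)])


# Hypothetical stub agents, for illustration only.
def toy_extract(note: str) -> list[dict]:
    return [{"type": "visit_summary", "content": note}]


def toy_brief(memories: list[dict]) -> str:
    return f"{len(memories)} memories on file"


ingest_visit("42", "Started Metformin.", toy_extract)
ingest_visit("42", "Mild nausea on empty stomach.", toy_extract)
print(prepare_briefing("42", toy_brief))  # 2 memories on file
```

Because the second visit extends the thread rather than replacing it, the briefing agent sees both entries.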

On the frontend, I expose three things:

A patient dashboard

A “Memory Inspector” to visualize stored memory

A briefing panel that shows what the system thinks matters

The UI is intentionally simple. Most of the work happens in how memory is structured and retrieved.

The problem: stateless systems don’t handle time

My first version followed the usual pattern:

Grab recent notes

Dump them into a prompt

Generate output

Something like:

prompt = f"""
Patient history:
{recent_notes}
Summarize key clinical details for the next visit.
"""

This works if “recent” is enough. It usually isn’t.

Real problems:

Medication reactions from older visits disappear

Follow-up commitments get lost

Personal context (job, family, habits) never survives

I kept increasing the context window. It didn’t help. The system wasn’t forgetting because of token limits. It was forgetting because it had no memory model.
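A small simulation makes the failure concrete. The notes and the window size below are made up for illustration: with a "last N notes" prompt, anything older than N visits is silently dropped, so raising N only postpones the problem.

```python
# Hypothetical visit history, oldest first.
notes = [
    "2025-09-10: Nausea when taking Metformin on empty stomach.",
    "2025-10-15: Routine check, BP stable.",
    "2025-11-20: Routine check, A1C trending down.",
    "2026-01-05: Discussed diet, no complaints.",
]


def recent_notes(history: list[str], n: int) -> list[str]:
    """The stateless pattern: keep only the last n notes."""
    return history[-n:]


window = recent_notes(notes, n=3)
prompt = "Patient history:\n" + "\n".join(window)
print("Metformin" in prompt)  # False: the medication reaction is gone
```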

I needed a way to give my agent memory.

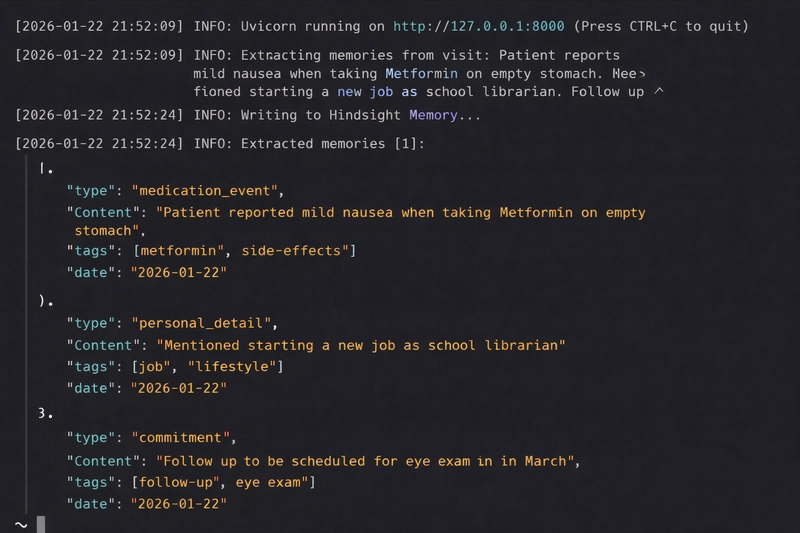

Switching to memory: events instead of text

Instead of storing notes, I started storing events.

Each visit produces multiple structured memories:

Visit summary

Medication event

Personal detail

Commitment

Lab result

Family history

Example:

{

"type": "medication_event",

"content": "Patient reported mild nausea when taking Metformin on empty stomach",

"tags": ["metformin", "side-effects"],

"date": "2026-01-22"

}

This immediately changes how the system behaves:

You can query specific types

You can prioritize certain events

You can explain outputs
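Here is a minimal sketch of the event model and the three properties listed above, using only the standard library. The type names mirror the list of memory types; the priority ordering and the helper names (`of_type`, `prioritized`, `explain`) are hypothetical choices for illustration, not part of the original system.

```python
from dataclasses import dataclass, field
from enum import Enum


class MemoryType(str, Enum):
    VISIT_SUMMARY = "visit_summary"
    MEDICATION_EVENT = "medication_event"
    PERSONAL_DETAIL = "personal_detail"
    COMMITMENT = "commitment"
    LAB_RESULT = "lab_result"
    FAMILY_HISTORY = "family_history"


# Hypothetical priority: lower number = surfaced first in a briefing.
PRIORITY = {MemoryType.MEDICATION_EVENT: 0, MemoryType.COMMITMENT: 1,
            MemoryType.LAB_RESULT: 2}


@dataclass
class MemoryEvent:
    type: MemoryType
    content: str
    date: str  # ISO 8601, e.g. "2026-01-22"
    tags: list[str] = field(default_factory=list)


def of_type(events: list[MemoryEvent], t: MemoryType) -> list[MemoryEvent]:
    """Query a specific type."""
    return [e for e in events if e.type == t]


def prioritized(events: list[MemoryEvent]) -> list[MemoryEvent]:
    """Order by priority (unlisted types last), newest first within a type."""
    by_date = sorted(events, key=lambda e: e.date, reverse=True)
    return sorted(by_date, key=lambda e: PRIORITY.get(e.type, 99))  # stable sort


def explain(e: MemoryEvent) -> str:
    """Render an event together with the type and date it came from."""
    return f"{e.content} [{e.type.value}, {e.date}]"


events = [
    MemoryEvent(MemoryType.PERSONAL_DETAIL, "New job as school librarian", "2026-01-22"),
    MemoryEvent(MemoryType.MEDICATION_EVENT,
                "Patient reported mild nausea when taking Metformin on empty stomach",
                "2026-01-22", ["metformin", "side-effects"]),
]
print(explain(prioritized(events)[0]))
```

None of this is possible with a blob of note text: the type field is what makes filtering, ordering, and citing cheap.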

The missing piece was persistence and retrieval.

Using Hindsight as the memory layer

I decided to try Hindsight for agent memory. I also explored the Hindsight agent memory docs to understand how threads and recall work.

Each patient gets their own thread:

thread_id = f"patient_{patient_id}"
await hindsight.write_memory(
    thread_id=thread_id,
    memory={
        "content": memory.content,
        "tags": memory.tags,
        "metadata": memory.metadata,
    },
)
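The write call above assumes each extracted memory already carries `content`, `tags`, and `metadata`. One plausible way to build that payload is to keep the event type and visit date in `metadata`, so they survive storage and can be used for filtering and citation later. The `ExtractedMemory` class, `to_payload`, and the metadata key names are assumptions for illustration; check the Hindsight docs for what metadata its client actually accepts.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ExtractedMemory:  # hypothetical shape of one extracted memory
    type: str
    content: str
    date: str
    tags: list[str] = field(default_factory=list)
    source_note_id: Optional[str] = None  # hypothetical: for traceability

    @property
    def metadata(self) -> dict[str, str]:
        # Keep structured fields alongside the text so they can be filtered
        # and cited later. Key names are assumptions, not a Hindsight schema.
        meta = {"type": self.type, "date": self.date}
        if self.source_note_id:
            meta["source_note_id"] = self.source_note_id
        return meta


def to_payload(memory: ExtractedMemory) -> dict:
    """Build the dict passed as `memory=` in the write call."""
    return {"content": memory.content, "tags": memory.tags,
            "metadata": memory.metadata}


m = ExtractedMemory("medication_event",
                    "Patient reported mild nausea when taking Metformin on empty stomach",
                    "2026-01-22", ["metformin", "side-effects"],
                    source_note_id="visit-0122")
print(to_payload(m)["metadata"])
```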

Retrieval is semantic, but scoped:

memories = await hindsight.recall_memories(
    thread_id=thread_id,
    query="diabetes medication side effects",
    limit=10,
)

Two things mattered here:

- Threads enforce isolation

Each patient’s history lives in its own namespace. That prevents cross-contamination and keeps retrieval focused.

- Recall is query-driven

Instead of passing everything, I can ask:

“What medication issues exist?”

“What commitments are pending?”

This is much closer to how a clinician reviews a chart: by asking targeted questions, not by rereading every note.
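To see why per-patient threads and query-driven recall matter together, here is a toy in-memory stand-in with the same two properties. It is not Hindsight and it is not semantic: recall here ranks by simple keyword overlap, purely to show that a query only ever searches one patient's thread. `ToyMemory` and its methods are hypothetical.

```python
import re
from collections import defaultdict


class ToyMemory:
    """Hypothetical in-memory stand-in: one list of memories per thread id."""

    def __init__(self) -> None:
        self._threads: dict[str, list[str]] = defaultdict(list)

    def write(self, thread_id: str, content: str) -> None:
        self._threads[thread_id].append(content)

    def recall(self, thread_id: str, query: str, limit: int = 10) -> list[str]:
        # Keyword overlap as a crude relevance score (real recall is semantic).
        words = set(re.findall(r"\w+", query.lower()))
        scored = []
        for text in self._threads.get(thread_id, []):  # only this thread
            score = len(words & set(re.findall(r"\w+", text.lower())))
            if score > 0:
                scored.append((score, text))
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [text for _, text in scored[:limit]]


mem = ToyMemory()
mem.write("patient_1", "Mild nausea on Metformin, a diabetes medication")
mem.write("patient_1", "Commitment: schedule eye exam")
mem.write("patient_2", "Rash after starting lisinopril, a medication change")
print(mem.recall("patient_1", "medication issues"))
```

Patient 2's lisinopril note also matches "medication", but it never appears in patient 1's results because the query is scoped to one thread.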

Extraction: where things got painful

Memory is only useful if it’s accurate. The extraction step was the hardest part.

I use an LLM (via Groq) to convert raw notes into structured memory:

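from groq import Groq

groq = Groq()  # reads GROQ_API_KEY from the environment
response = groq.chat.completions.create(
    model="openai/gpt-oss-120b",
    response_format={"type": "json_object"},
    messages=[
        {"role": "system",
         "content": "Extract structured clinical memories from the note. Return JSON."},
        {"role": "user", "content": note_text},  # note_text: the raw visit note
    ],
    temperature=0.2,
)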
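With `json_object` mode the model returns a JSON string in `response.choices[0].message.content`, but nothing guarantees its shape. Below is a sketch of a defensive parser, assuming the prompt asks for a top-level `{"memories": [...]}` object; that key, the allowed type list, and `parse_memories` itself are assumptions. It drops entries with unknown types or empty content instead of trusting them.

```python
import json

ALLOWED_TYPES = {"visit_summary", "medication_event", "personal_detail",
                 "commitment", "lab_result", "family_history"}


def parse_memories(raw: str) -> list[dict]:
    """Parse the model's JSON and keep only well-formed memories."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return []
    # Assumed response shape: {"memories": [{"type", "content", "tags"}, ...]}
    items = data.get("memories", []) if isinstance(data, dict) else []
    clean = []
    for item in items:
        if not isinstance(item, dict):
            continue
        mem_type = item.get("type")
        content = str(item.get("content", "")).strip()
        if isinstance(mem_type, str) and mem_type in ALLOWED_TYPES and content:
            tags = item.get("tags", [])
            clean.append({"type": mem_type, "content": content,
                          "tags": tags if isinstance(tags, list) else []})
    return clean


# In the real flow: raw = response.choices[0].message.content
raw = ('{"memories": [{"type": "medication_event", "content": "Nausea on Metformin",'
       ' "tags": ["metformin"]}, {"type": "gossip", "content": "Likes the weather"}]}')
print(parse_memories(raw))
```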

Problems I ran into:

The model would over-extract trivial details

It would miss implicit commitments

Tags were inconsistent

Fixes:

Strict schema with Pydantic

Explicit examples in the prompt

Post-processing to normalize tags
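Tag normalization can be as simple as lowercasing, collapsing separators, and mapping known synonyms to one canonical tag. The synonym table and function names here are hypothetical; in practice the table would grow from the inconsistencies you actually see in extraction output.

```python
import re

# Hypothetical synonym table: variant -> canonical tag.
SYNONYMS = {
    "side-effect": "side-effects",
    "adverse-reaction": "side-effects",
    "glucophage": "metformin",  # brand name -> generic
}


def normalize_tag(tag: str) -> str:
    """Lowercase, turn spaces/underscores into hyphens, apply synonyms."""
    t = re.sub(r"[\s_]+", "-", tag.strip().lower())
    return SYNONYMS.get(t, t)


def normalize_tags(tags: list[str]) -> list[str]:
    """Normalize, drop empties, and de-duplicate while keeping order."""
    seen: dict[str, None] = {}
    for tag in tags:
        t = normalize_tag(tag)
        if t:
            seen.setdefault(t, None)
    return list(seen)


print(normalize_tags(["Metformin", "Side Effect", "side_effects", "Glucophage"]))
# ['metformin', 'side-effects']
```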

Extraction is not “set and forget.”

Generating briefings: only as good as memory

Once memory is reliable, briefing becomes straightforward.

The agent pulls:

All patient memories

Relevant cross-patient patterns

memories = await hindsight.get_all_patient_memories(patient_id)
patterns = await hindsight.recall_patterns(query="diabetes risk")

Then it generates a structured briefing.

The key difference:

I’m not asking the model to remember. I’m giving it memory.
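In practice, giving the model memory means putting the recalled entries into the prompt in a form it can cite. A minimal sketch, assuming memories are dicts with `type`, `date`, and `content` and patterns are plain strings; `build_briefing_prompt`, the `[M1]` numbering scheme, and the instruction wording are illustrative choices, not the project's actual prompt.

```python
def build_briefing_prompt(memories: list[dict], patterns: list[str]) -> str:
    """Hypothetical prompt builder: number each memory so the briefing can
    cite its sources as [M1], [M2], ..."""
    ordered = sorted(memories, key=lambda m: m["date"])  # oldest first
    memory_lines = [
        f"[M{i}] ({m['date']}, {m['type']}) {m['content']}"
        for i, m in enumerate(ordered, start=1)
    ]
    pattern_lines = [f"- {p}" for p in patterns] or ["- none"]
    return "\n".join([
        "Patient memories:",
        *memory_lines,
        "",
        "Relevant cross-patient patterns:",
        *pattern_lines,
        "",
        "Write a pre-visit briefing with 'Today's Focus' and a suggested opener.",
        "Cite the memory ids that support each point, e.g. [M2].",
    ])


prompt = build_briefing_prompt(
    [{"date": "2026-01-22", "type": "commitment", "content": "Schedule eye exam"},
     {"date": "2026-01-08", "type": "lab_result", "content": "A1C improved"}],
    [],
)
print(prompt.splitlines()[1])  # [M1] (2026-01-08, lab_result) A1C improved
```

Asking for citations by id is also what makes the traceability lesson below checkable: every bullet in the briefing should map back to a stored memory.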

What the system actually produces

Input note

“Patient reports mild nausea when taking Metformin on empty stomach. Started new job as school librarian…”

Stored memory

Medication event → Metformin nausea

Personal detail → new job

Commitment → schedule eye exam

Later: generated briefing

Today’s Focus

Review A1C improvement

Confirm eye exam scheduling

Assess impact of new job on diet

Suggested opener

“How’s the new librarian role going? Are you finding ways to pack healthy lunches?”

This is where the system stops feeling generic.

Patterns across patients

I also maintain a global memory thread:

thread_id = "patterns_global"

The pattern agent identifies trends like:

Diabetes + hypertension + family history → elevated stroke risk

Frequent visits → better adherence
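How the pattern agent finds these trends is not shown here. As one simple, hypothetical way to surface a combination like the first one, this sketch counts how many patients share a given set of condition tags across their threads. The function name, tag names, and thresholds are illustrative assumptions; a real pattern agent would likely layer an LLM or proper statistics on top.

```python
from collections import Counter
from itertools import combinations


def co_occurring(patient_tags: dict[str, set[str]], size: int = 3,
                 min_patients: int = 2) -> list[tuple[tuple[str, ...], int]]:
    """Hypothetical helper: count tag combinations of `size` shared by at
    least `min_patients` patients, most common first."""
    counts = Counter()
    for tags in patient_tags.values():
        # Sorting makes each combination a canonical, hashable key.
        for combo in combinations(sorted(tags), size):
            counts[combo] += 1
    return [(combo, n) for combo, n in counts.most_common() if n >= min_patients]


patients = {
    "patient_1": {"diabetes", "hypertension", "family-history-stroke"},
    "patient_2": {"diabetes", "hypertension", "family-history-stroke", "smoker"},
    "patient_3": {"diabetes", "asthma"},
}
print(co_occurring(patients))
# [(('diabetes', 'family-history-stroke', 'hypertension'), 2)]
```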

Lessons learned

- Context is not memory

Passing more tokens is not a substitute for real memory.

- Structure beats embeddings alone

Without structure, you can’t prioritize or explain outputs.

- Extraction quality determines everything

Bad input → bad system. Most improvements came from better schemas and prompts.

- Traceability matters

If the system says something, you should know where it came from.

- Memory needs fallback

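The memory layer is an external dependency, and external systems fail. Always have a backup path so a briefing never silently runs without patient history.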
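One common shape for that fallback is to wrap recall so that a failing or slow remote call degrades to a local copy instead of producing a briefing with no history. A minimal async sketch: `remote_recall` stands in for a call like `hindsight.recall_memories`, and `local_cache`, `recall_with_fallback`, and the timeout value are hypothetical.

```python
import asyncio
from typing import Awaitable, Callable

RecallFn = Callable[[str, str, int], Awaitable[list[str]]]

# Hypothetical local cache: thread_id -> last recall results seen for that thread.
local_cache: dict[str, list[str]] = {}


async def recall_with_fallback(remote_recall: RecallFn, thread_id: str,
                               query: str, limit: int = 10,
                               timeout: float = 5.0) -> tuple[list[str], str]:
    """Try the memory service first; fall back to the local cache on failure.

    Returns the memories plus their source, so the UI can flag degraded mode.
    """
    try:
        results = await asyncio.wait_for(
            remote_recall(thread_id, query, limit), timeout)
    except Exception:  # network error, timeout, service outage
        return local_cache.get(thread_id, [])[:limit], "local_cache"
    local_cache[thread_id] = results  # refresh the cache on success
    return results, "remote"


async def ok_recall(thread_id: str, query: str, limit: int) -> list[str]:
    return ["Mild nausea on Metformin"]


async def flaky_recall(thread_id: str, query: str, limit: int) -> list[str]:
    raise ConnectionError("memory service unavailable")


async def demo() -> None:
    print(await recall_with_fallback(ok_recall, "patient_1", "medications"))
    print(await recall_with_fallback(flaky_recall, "patient_1", "medications"))


asyncio.run(demo())
```

Returning the source alongside the results matters as much as the fallback itself: a briefing built from cached memory should say so.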

Closing

I was tired of prompt engineering and started looking for a better way to help my agent remember.

Using Hindsight forced me to think differently:

Events instead of text

Threads instead of prompts

Recall instead of context stuffing

Once I made that shift, the system stopped forgetting.

And more importantly, it started behaving like it understood patients across time.
