BigQuery is a petabyte-scale analytics data warehouse that you can use to run SQL queries over vast amounts of data in near realtime.

Data visualization tools can help you make sense of your BigQuery data and help you analyze the data interactively. You can use visualization tools to help you identify trends, respond to them, and make predictions using your data. In this tutorial, you use the BigQuery Python client library and Pandas in a Jupyter notebook to visualize data in the BigQuery natality sample table.

Objectives

In this tutorial you:

- Set up an environment to run Jupyter notebooks

- Query and visualize BigQuery data using BigQuery Python client library and Pandas

Costs

BigQuery is a paid product and you incur BigQuery usage costs when accessing BigQuery. BigQuery query pricing provides the first 1 TB per month free of charge. For more information, see the BigQuery Pricing page.

Before you begin

Before you begin this tutorial, use the Google Cloud Platform Console to create or select a project and enable billing.

-

Sign in to your Google Account.

If you don't already have one, sign up for a new account.

-

Select or create a Google Cloud Platform project.

-

Make sure that billing is enabled for your Google Cloud Platform project.

- BigQuery is automatically enabled in new projects. To activate BigQuery in a pre-existing project, Enable the BigQuery API.

Setting up a Local Jupyter Environment

In this tutorial, you use a locally hosted Jupyter notebook. Complete the following steps to install Jupyter, set up authentication, and install the required Python libraries.

Run the following command in your terminal to install the latest version of the BigQuery Python client library, including the Pandas library, which is required for functions that use Pandas:

pip install --upgrade google-cloud-bigquery[pandas]Follow the installation instructions in the Jupyter documentation to install Jupyter.

Follow the instructions in the Getting started with authentication page to set up Application Default Credentials. You set up authentication by creating a service account and setting an environment variable.

Overview: Jupyter Notebooks

A notebook provides an environment to author and execute code. A notebook is

essentially a source artifact, saved as a .ipynb file — it can contain

descriptive text content, executable code blocks, and associated results

(rendered as interactive HTML). Structurally, a notebook is a sequence of cells.

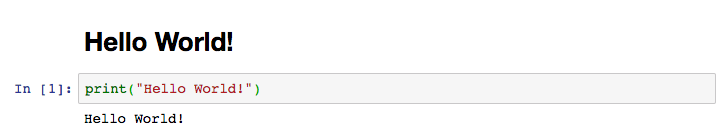

A cell is a block of input text that is evaluated to produce results. Cells can be of two types:

Code cells: contain code to evaluate. Any outputs or results from executing the code are rendered immediately below the input code.

Markdown cells: contain markdown text that is converted to HTML to produce headers, lists, and formatted text.

The screenshot below shows a markdown cell followed by a Python code cell. Note that the output of the Python cell is shown immediately below the code.

Each opened notebook is associated with a running session. In IPython, this is also referred to as a kernel. This session executes all the code entered within the notebook, and manages the state (variables, their values, functions and classes, and any existing Python modules you load).

Querying and visualizing BigQuery data

In this section of the tutorial, you create a Cloud Datalab notebook used to query and visualize data in BigQuery. You create visualizations using the data in the natality sample table. All queries in this tutorial are in standard SQL syntax.

To query and visualize BigQuery data using a Jupyter notebook:

If you haven't already started Jupyter, run the following command in your terminal:

jupyter notebookJupyter should now be running and open in a browser window. In the Jupyter window, click the New button and select Python 2 or Python 3 to create a new Python notebook.

At the top of the page, click Untitled.

In the Rename notebook dialog, type a new name such as

BigQuery tutorial, and then click Rename.The BigQuery Python client library provides a magic command that allows you to run queries with minimal code. To load the magic commands from the client library, paste the following code into the first cell of the notebook.

%load_ext google.cloud.bigquery

Run the command by clicking the Run button or with

SHIFT + ENTER.The BigQuery client library provides a cell magic,

%%bigquery, which runs a SQL query and returns the results as a Pandas DataFrame. Enter the following into the next cell to return total births by year.Click Run.

The query results appear below the code cell.

In the next cell block, enter the following command to run the same query, but this time save the results to a new variable

total_births, which is given as an argument to the%%bigquery. The results can then be used for further analysis and visualization.Click Run.

Now you have a Pandas DataFrame saved to variable

total_births, which is ready to plot. To prepare for plotting the query results, paste the following built-in magic command in the next cell to activate matplotlib, which is the library used by Pandas for plotting.%matplotlib inline

Click Run.

In the next cell, enter the following code to use the Pandas

DataFrame.plot()method to visualize the query results as a bar chart. See the Pandas documentation to learn more about data visualization with Pandas.Click Run.

The chart appears below the code block.

Next, paste the following query into the next cell to retrieve the number of births by weekday.

Because the

wday(weekday) field allows null values, the query excludes records wherewdayis null.Click Run.

In the next cell, enter the following code to visualize the query results using a line chart.

Click Run.

The chart appears below the code block. Notice the number of births dramatically decreases on Sunday (1) and Saturday (7).

Click File > Save and Checkpoint or click the save icon in the toolbar. Creating a checkpoint allows you to roll the notebook back to a previous state.

Pandas DataFrames

Magic commands allow you to use minimal syntax to interact with

BigQuery. Behind the scenes, %%bigquery uses the

BigQuery Python client library to run the given query, convert

the results to a Pandas Dataframe, optionally save the results to a variable,

and finally display the results. Using the BigQuery Python

client library directly instead of through magic commands gives you more

control over your queries and allows for more complex configurations. The

library's integrations with Pandas enable you to combine the power of

declarative SQL with imperative code (Python) to perform interesting data

analysis, visualization, and transformation tasks.

Querying and visualizing BigQuery data using pandas DataFrames

In this section of the tutorial, you query and visualize data in BigQuery using pandas DataFrames. You use the BigQuery Python client library to query BigQuery data. You use the Pandas library to analyze data using DataFrames.

Type the following Python code into the next cell to import the BigQuery Python client library and initialize a client. The BigQuery client is used to send and receive messages from the BigQuery API.

Click Run.

Use the Client.query() method to run a query. In the next cell, enter the following code to run a query to retrieve the annual count of plural births by plurality (2 for twins, 3 for triplets, and so on).

Click Run.

To chart the query results in your DataFrame, insert the following code into the next cell to pivot the data and create a stacked bar chart of the count of plural births over time.

Click Run.

The chart appears below the code block.

In the next cell, enter the following query to retrieve the count of births by the number of gestation weeks.

Click Run.

To chart the query results in your DataFrame, paste the following code in the next cell.

Click Run.

The bar chart appears below the code block.

What's next

Learn more about writing queries for BigQuery — Querying data in the BigQuery documentation explains how to run queries, create user-defined functions (UDFs), and more.

Explore BigQuery syntax — The preferred dialect for SQL queries in BigQuery is standard SQL, which is described in the SQL reference. BigQuery's legacy SQL-like syntax is described in the Query reference (legacy SQL).